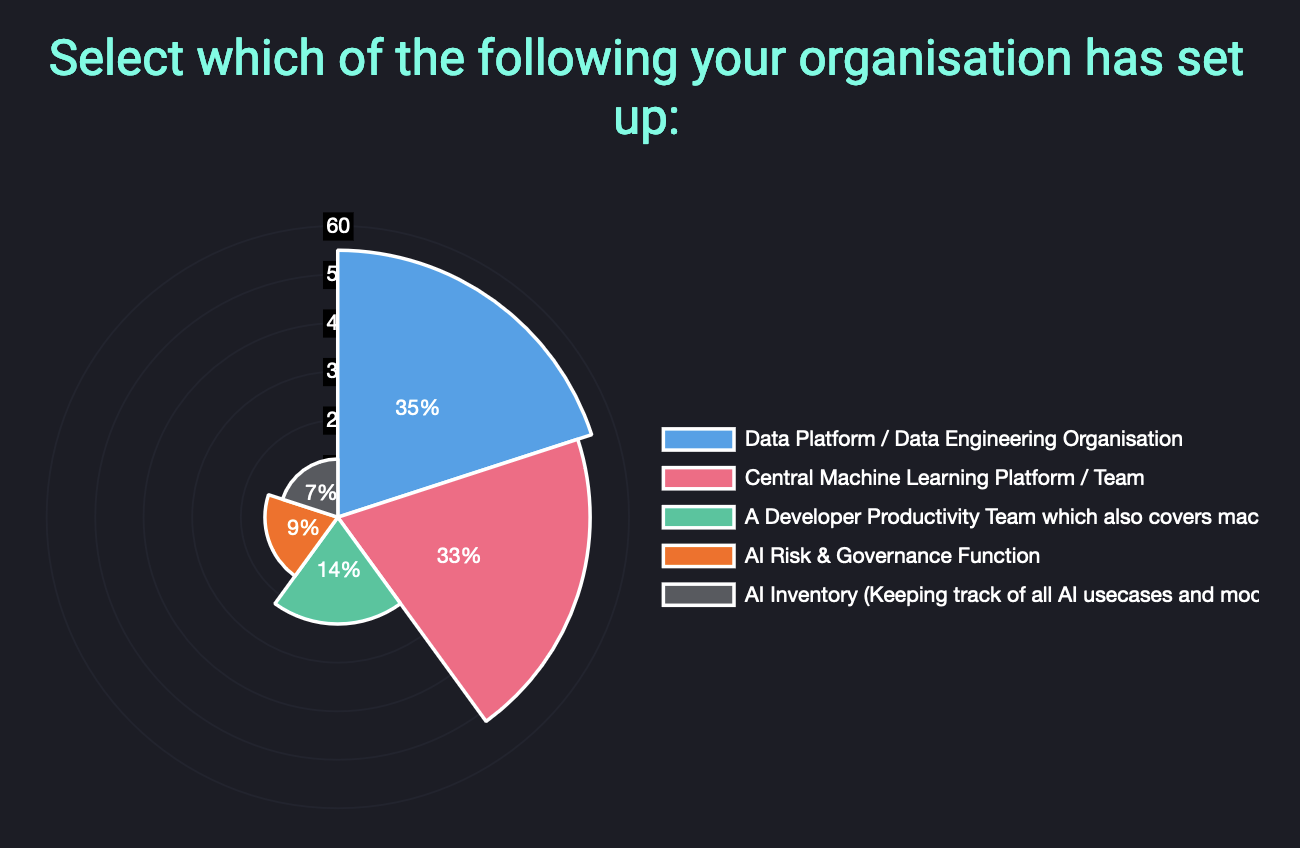

MLOps Organisational Setups

Central functions support machine learning ambitions across organisations; only ~30% of organisations have a central Data / ML platform, and less than 10% have central AI Risk Governance: Establishing central functions to support teams is a growing trend in machine learning maturity across organisations, and we see some interesting patterns: fewer than 10% of organisations have a central "AI Inventory" or an "AI Risk & Governance Function"; fewer than 15% have a central dev productivity function; and slightly over 30% have a central Data Platform or central ML Platform.

💻 We are uncovering great insights as part of our survey on The State of Production ML in 2024; please contribute to this valuable investigation on machine learning tools and platforms used in your production ML development. Your input will help create a comprehensive overview of common practices, tooling preferences, and challenges faced when deploying models to production, ultimately benefiting the entire ML community 🚀


National Regulation on AI

We have overhauled our repository of AI Regulation, Principles & Guidelines, which now contains a listing of major developments in national AI strategies, regulations and guidelines: The latest iteration of this GitHub list provides an overview of global AI regulation, highlighting key resources from economic areas such as the European Union's AI Act, the United States' Executive Order on AI, China's Interim Measures for Generative AI Services, and the UK's pro-innovation regulatory approach, among many others. As always, it's an open source initiative, so if there are any developments that are not included please do feel free to contribute with a PR!


Netflix's Time Series Infrastructure

The scale that Netflix has to deal with is massive, as reflected in last week's global streaming issues. They share one of their approaches to scaling, including their TimeSeries Data Abstraction Layer, which efficiently stores and queries massive volumes of immutable temporal event data: This is quite an in-depth walkthrough of Netflix's architecture, which handles up to 10 million writes per second and petabytes of data with low-millisecond latency by using temporal partitioning and event bucketing strategies as part of their TimeSeries Abstraction Layer. They support flexible storage backends such as Cassandra and Elasticsearch, with tunable configurations for scalability and cost efficiency, which is critical for internal use-cases such as production machine learning at massive scale.
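To make the two ideas above a bit more concrete, here is a minimal in-memory sketch of temporal partitioning combined with event bucketing. It is not Netflix's implementation: the class name `BucketedEventStore`, the `floor_to` helper, and the one-day slice and five-minute bucket widths are all assumptions chosen for illustration, and a plain dictionary stands in for a real backend such as Cassandra.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone

# Hypothetical widths, chosen only for this sketch.
TIME_SLICE = timedelta(days=1)      # coarse temporal partition
TIME_BUCKET = timedelta(minutes=5)  # finer bucket inside a partition
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def floor_to(ts, width):
    """Round a timezone-aware timestamp down to a multiple of `width`."""
    return ts - (ts - EPOCH) % width


class BucketedEventStore:
    """Toy append-only store: time slice -> (series id, bucket) -> events."""

    def __init__(self):
        self._slices = defaultdict(lambda: defaultdict(list))

    def write(self, series_id, ts, payload):
        # Events are immutable, so a write is always an append.
        slice_start = floor_to(ts, TIME_SLICE)
        bucket_start = floor_to(ts, TIME_BUCKET)
        self._slices[slice_start][(series_id, bucket_start)].append((ts, payload))

    def query(self, series_id, start, end):
        """Return events with start <= ts < end, ordered by timestamp."""
        results = []
        bucket = floor_to(start, TIME_BUCKET)
        while bucket < end:
            # Only the slices and buckets overlapping the range are visited.
            partition = self._slices.get(floor_to(bucket, TIME_SLICE))
            if partition is not None:
                for ts, payload in partition.get((series_id, bucket), []):
                    if start <= ts < end:
                        results.append((ts, payload))
            bucket += TIME_BUCKET
        return sorted(results, key=lambda event: event[0])


store = BucketedEventStore()
t0 = datetime(2024, 11, 20, 23, 58, tzinfo=timezone.utc)
store.write("user-1", t0, "play")
store.write("user-1", t0 + timedelta(minutes=4), "pause")  # lands in the next day's slice
store.write("user-2", t0, "play")
events = store.query("user-1", t0, t0 + timedelta(minutes=10))
```

The point of the layout is that a range query only touches the slices and buckets that overlap the requested window, and an entire old slice can be dropped in one operation once it ages out.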


GenAI Increasing Tech Debt

Contrary to the belief that AI improves dev quality, we are starting to see a growing number of resources showing how it actually amplifies tech debt costs: This is an insightful resource that shows how GenAI can widen the productivity gap between "low tech-debt" and "high tech-debt" codebases. Generative AI tools tend to be useful with simple, modular, and ubiquitously developed systems; however, they struggle with complex or legacy codebases that contain tech debt, which often makes it hard to leverage AI effectively.


What is an AI Engineer?

The rise of generative AI and Large Language Models has transformed the role of the AI Engineer into a high-demand position that blends deep technical expertise with strategic business insight; but what does it actually consist of? This is a great resource from Gradient Flow that offers a definition of the role companies are seeking when they hire AI engineers: these are often technical practitioners who can develop and fine-tune LLMs for domain-specific applications and integrate them into scalable production systems using skills in Python and AI frameworks such as PyTorch and TensorFlow. This also extends to cloud platforms and MLOps practices. From a more personal perspective, it seems to me that the role itself is still nascent and would have to be broken down into sub-roles, such as data engineering, ML engineering, MLOps engineering and domain expertise, instead of fitting everything into a single role.


Upcoming MLOps Events

The MLOps ecosystem continues to grow at breakneck speed, making it ever harder for us as practitioners to stay up to date with relevant developments. A fantastic way to keep on top of relevant resources is through the great community and events that the MLOps and Production ML ecosystem offers. This is the reason why we have started curating a list of upcoming events in the space, which are outlined below.

Upcoming conferences where we're speaking:

Other upcoming MLOps conferences in 2024:

In case you missed our talks:


Check out the fast-growing ecosystem of production ML tools & frameworks at the GitHub repository, which has reached over 10,000 ⭐ GitHub stars. We are currently looking for more libraries to add; if you know of any that are not listed, please let us know or feel free to add a PR. Four featured libraries in the GPU acceleration space are outlined below.

- Kompute - Blazing fast, lightweight and mobile phone-enabled GPU compute framework optimized for advanced data processing use cases.

- CuPy - An implementation of NumPy-compatible multi-dimensional array on CUDA. CuPy consists of the core multi-dimensional array class, cupy.ndarray, and many functions on it.

- JAX - Composable transformations of Python+NumPy programs: differentiate, vectorize, JIT to GPU/TPU, and more.

- cuDF - Built on the Apache Arrow columnar memory format, cuDF is a GPU DataFrame library for loading, joining, aggregating, filtering, and otherwise manipulating data.

If you know of any open source and open community events that are not listed, do give us a heads up so we can add them!


As AI systems become more prevalent in society, we face bigger and tougher societal challenges. We have seen a large number of resources that aim to tackle these challenges in the form of AI Guidelines, Principles, Ethics Frameworks, etc.; however, there are so many resources that it is hard to navigate them. Because of this we started an Open Source initiative that aims to map the ecosystem to make it simpler to navigate. You can find multiple principles in the repo; some examples include the following:

- MLSecOps Top 10 Vulnerabilities - This is an initiative that aims to further the field of machine learning security by identifying the top 10 most common vulnerabilities in the machine learning lifecycle as well as best practices.

- AI & Machine Learning 8 principles for Responsible ML - The Institute for Ethical AI & Machine Learning has put together 8 principles for responsible machine learning that are to be adopted by individuals and delivery teams designing, building and operating machine learning systems.

- An Evaluation of Guidelines - The Ethics of Ethics; A research paper that analyses multiple Ethics principles.

- ACM's Code of Ethics and Professional Conduct - This is the code of ethics that was put together in 1992 by the Association for Computing Machinery and updated in 2018.

If you know of any guidelines that are not in the "Awesome AI Guidelines" list, please do give us a heads up or feel free to add a pull request!


The Institute for Ethical AI & Machine Learning is a European research centre that carries out world-class research into responsible machine learning.


You received this email because you are registered with The Institute for Ethical AI & Machine Learning's newsletter "The Machine Learning Engineer".


© 2023 The Institute for Ethical AI & Machine Learning


The Institute for Ethical AI & Machine Learning
